AI Product Design · 2024

ModuMind

See your mind. Shape it.

AI Product Design

AI / LLM

3D / WebGL

UX Design

Psychology

Three.js

Claude SDK

Product Design · 3D · AI

2024

A therapeutic tool that turns limiting mental patterns into 3D scenes you can physically transform. AI-guided conversation walks you from describing a stuck belief to embodying its alternative — with the visualization shifting in real time as you work through it.

See the website

Stack

The kit that built this project — from research to deploy. AI tools called out separately because they shape how the work gets made, not just what it's made of.

AI

Design

Code

Infrastructure

Cognitive Behavioral Therapy works by identifying limiting thoughts and reframing them. But 'reframing' is one of the most abstract verbs in mental health — easy to say, hard to feel. The clinical literature is full of evidence that reframes which stay in language stay in language, while reframes that get embodied — through writing, drawing, role-play, somatic work — actually shift behavior. ModuMind asks: what would it look like if a digital tool offered embodiment without requiring a therapist in the room?

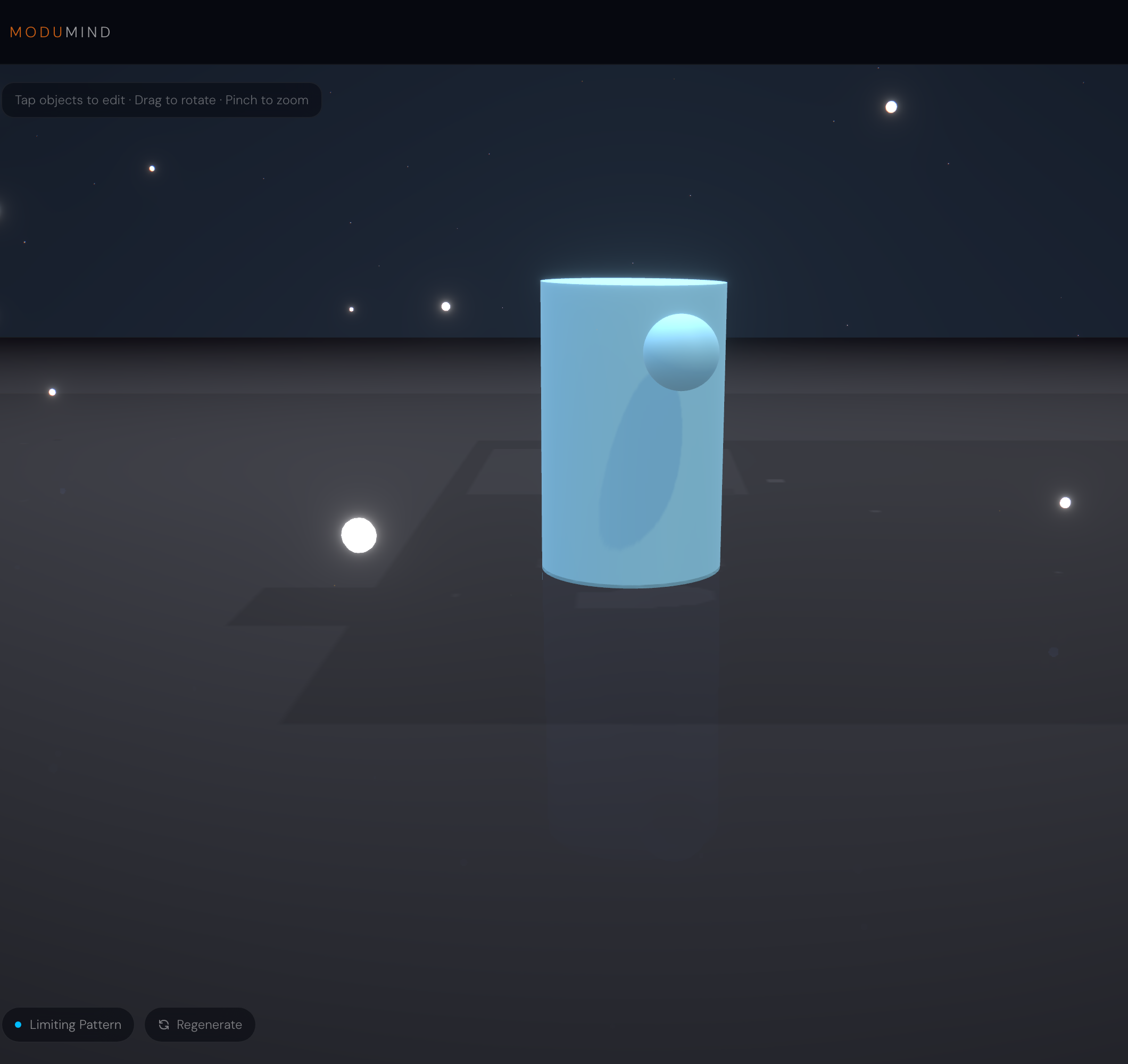

Your pattern becomes a generated 3D scene. You describe what you're feeling in your own words. Claude reads it and gives the pattern a name and a category, and you confirm or refine. The AI then generates a visual brief (imagery, colors, texture) and renders the scene. From there it becomes interactive: you can describe modifications in plain language ('make the room brighter, add a window') and the scene updates as you work through the reframe in a guided AI conversation.

Four UI screens move you from a sentence to a generated scene. They were captured directly from the live deployment at modumind-app.vercel.app, using the prompt 'I always feel like I'm on trial in my relationships. I'm convinced everyone is judging me.'

01 — Landing

Atmospheric warmth — orange-cream gradients, serif headline, the four-step process visible immediately. The five chips ('3D Visualization', 'AI Reframing', etc.) exist to set expectations: this is not a chatbot.

02 — Pattern intake

Open prompt with four starter examples for users who feel stuck. These aren't placeholders; they're an offered vocabulary for people who don't have one yet.

03 — Here's what I see

Claude reads the entry and returns a named pattern ('The Courtroom Mind') with a description that mirrors the user's exact framing, plus a category and intensity reading. Every field is editable.

04 — AI imagines the visual

Claude composes a three-part visual brief — imagery, colors, texture — written like a film treatment. The brief is the bridge between language and image.

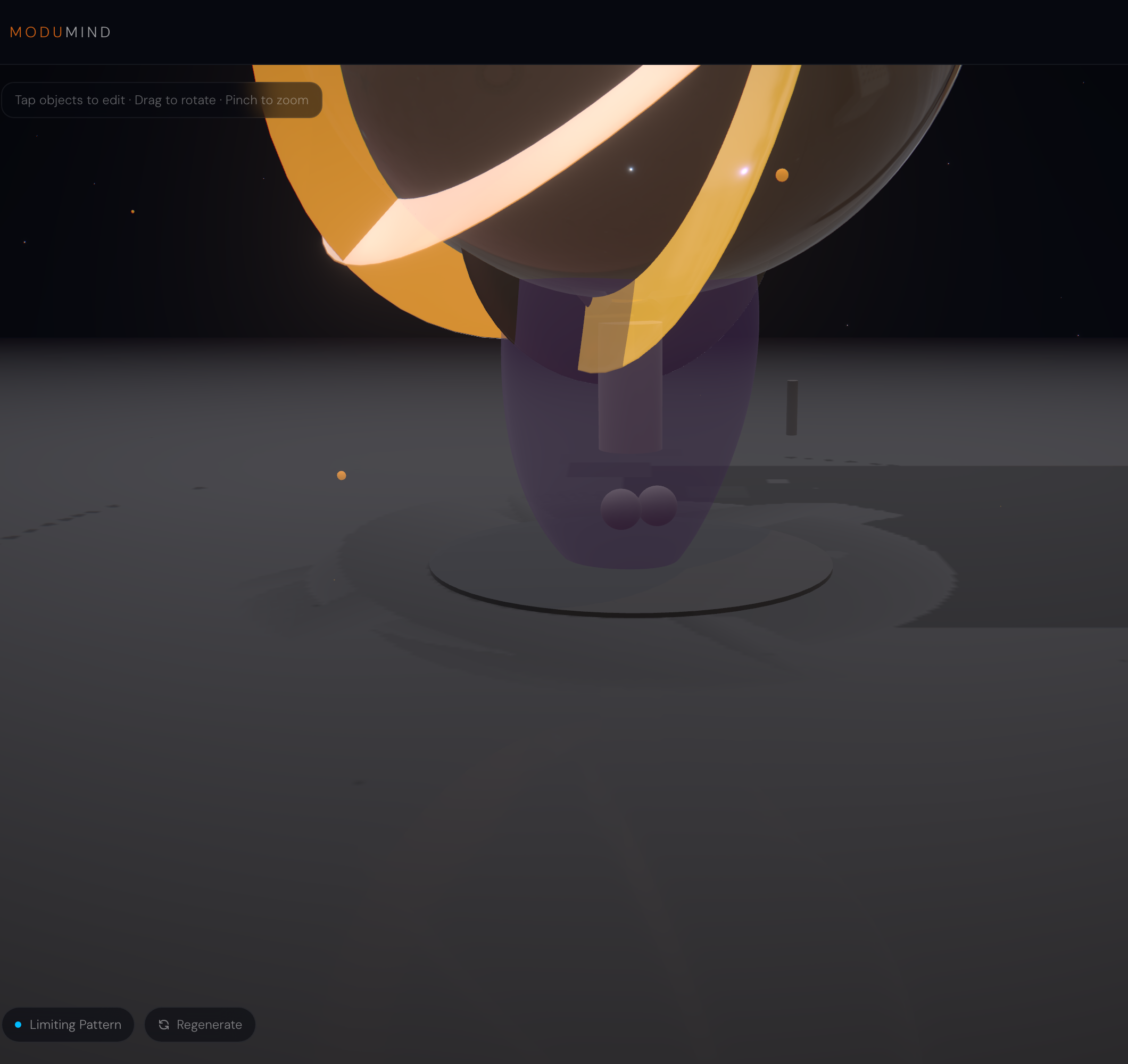

The scene the AI generated for the courtroom prompt: a constrained brass sphere with chained appendages, pinned between cold concrete pillars under a dim spotlight. The visual is doing the work — you can point at the chains.

The defining interaction: once a scene exists, you describe what should change in plain language and the scene re-generates. Below are two real patterns I ran through the live app, each with the original generated scene and the version produced after a single chat modification.

Pattern prompt

“I always feel like I'm on trial in my relationships. I'm convinced everyone is judging me.”

User modification (typed in chat)

“open the doors, fill the room with warm sunlight, replace the empty pews with a circle of friends”

Same scene engine, two states. The AI's response in chat was: 'What a beautiful transformation you've just created! The doors are now open, warm sunlight floods in, and you're surrounded by a circle of friends instead of empty judgment seats. As you look at this new scene, what does it feel like to imagine being in the center of that circle?' The reframe is no longer abstract — it's visible.

Pattern prompt

“I feel like I'm carrying everyone's expectations on my shoulders. The weight is crushing and I can't put it down.”

User modification (typed in chat)

“let me set the weight down on the ground, replace the figure straining with a person standing tall and free with open hands”

A small purple figure crushed under a heavy curved structure becomes a glowing standalone figure with a companion orb floating beside it. The weight is gone, but the figure isn't alone — that subtlety came from the user's own modification, not from the AI's first instinct.

The user types whatever comes out. Claude reads it once and returns a named pattern (here: 'The Courtroom Mind'), a mirroring description in the user's own framing, a category (Relationship Patterns / Performance Anxiety / Self-Worth / Need for Control / Fear & Avoidance / Comparison & Competition), and an intensity reading. Every field is user-editable — the AI is offering, not deciding.
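The shape of that reading can be sketched as a TypeScript type with a small validator. This is an illustration under stated assumptions, not the app's actual code: the field names (`name`, `description`, `category`, `intensity`), the idea that the reply arrives as JSON, and the helpers `parsePatternReading` and `applyUserEdits` are all hypothetical. Only the six category labels come from the list above, and the intensity scale is left unspecified because the source does not state one.

```typescript
// The six categories listed above; everything else here is a hypothetical shape.
const CATEGORIES = [
  "Relationship Patterns",
  "Performance Anxiety",
  "Self-Worth",
  "Need for Control",
  "Fear & Avoidance",
  "Comparison & Competition",
] as const;

type Category = (typeof CATEGORIES)[number];

// Assumed field names; the source only says the reading has these four parts.
interface PatternReading {
  name: string; // e.g. "The Courtroom Mind"
  description: string; // mirrors the user's own framing
  category: Category;
  intensity: number; // scale is not specified in the source
}

function isCategory(value: unknown): value is Category {
  return (
    typeof value === "string" &&
    (CATEGORIES as readonly string[]).indexOf(value) !== -1
  );
}

// Parse a JSON string (assumed to be the model's reply) into a PatternReading,
// throwing if any field is missing or has the wrong type.
function parsePatternReading(raw: string): PatternReading {
  const data: unknown = JSON.parse(raw);
  if (typeof data !== "object" || data === null) {
    throw new Error("Expected a JSON object");
  }
  const { name, description, category, intensity } = data as Record<string, unknown>;
  if (typeof name !== "string" || name.length === 0) throw new Error("Missing name");
  if (typeof description !== "string") throw new Error("Missing description");
  if (!isCategory(category)) throw new Error("Unknown category");
  if (typeof intensity !== "number" || !isFinite(intensity)) {
    throw new Error("Missing intensity");
  }
  return { name, description, category, intensity };
}

// Every field stays editable: the user's edits override the AI's offer.
function applyUserEdits(
  reading: PatternReading,
  edits: Partial<PatternReading>
): PatternReading {
  return { ...reading, ...edits };
}
```

Validating the reply before showing it keeps a malformed response from reaching the confirmation screen, and routing edits through one function keeps the "offering, not deciding" rule in a single place.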

Once the pattern is confirmed, Claude composes a three-part brief — imagery, colors, texture — written like a film treatment. For 'The Courtroom Mind' it returned: 'a lone figure standing in the center of a vast, dimly lit courtroom, surrounded by empty wooden benches that somehow still feel occupied — shadows pooling in the seats like silent witnesses, a single harsh spotlight illuminating the figure white,' colors 'deep mahogany brown, cold ivory white, muted charcoal grey,' texture 'stiff and unyielding, like starched formal clothing worn too tight.' The brief is the bridge between language and image.
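As a rough sketch, the three-part brief could be carried as a small typed object and flattened into one prompt for the scene step. The `VisualBrief` interface, the `briefToPrompt` helper, and the prompt wording are assumptions made for illustration; the source only names the three parts. The sample values are taken from the Courtroom Mind brief quoted above, with the imagery abridged.

```typescript
// Assumed shape for the three-part brief; the source names the three parts only.
interface VisualBrief {
  imagery: string;
  colors: string[];
  texture: string;
}

// Hypothetical helper: flatten a brief into a single text prompt for the
// scene generation step. The label wording is illustrative.
function briefToPrompt(brief: VisualBrief): string {
  return [
    `Imagery: ${brief.imagery}`,
    `Colors: ${brief.colors.join(", ")}`,
    `Texture: ${brief.texture}`,
  ].join("\n");
}

// Values from the brief quoted above (imagery abridged).
const courtroomBrief: VisualBrief = {
  imagery: "a lone figure standing in the center of a vast, dimly lit courtroom",
  colors: ["deep mahogany brown", "cold ivory white", "muted charcoal grey"],
  texture: "stiff and unyielding, like starched formal clothing worn too tight",
};

const courtroomPrompt = briefToPrompt(courtroomBrief);
```

Keeping the brief structured until the last moment means each part can be shown and edited on its own before it is collapsed into text.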

The brief feeds an image generation step that produces the actual 3D scene. The user can then describe modifications in plain language — 'make the cage wider', 'change the color to blue', 'add a window' — and the scene re-generates. This is where the abstract reframe becomes physical: the visual moves as the user moves through the conversation.
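One way to model that modification loop is to keep the confirmed brief fixed and append each plain-language change to a list, rebuilding the prompt on every re-generation. This is a minimal sketch, not a description of how the live app stores scenes: `SceneState`, `applyModification`, and `buildPrompt` are hypothetical names, and the prompt format is an assumption.

```typescript
// Hypothetical state for one scene: the prompt derived from the confirmed brief
// plus every plain-language change the user has typed so far.
interface SceneState {
  basePrompt: string;
  modifications: string[];
}

// Record a modification without mutating the previous state, so the original
// scene stays available for comparison.
function applyModification(state: SceneState, instruction: string): SceneState {
  const trimmed = instruction.trim();
  if (trimmed.length === 0) return state; // ignore empty input
  return {
    basePrompt: state.basePrompt,
    modifications: state.modifications.concat(trimmed),
  };
}

// Build the prompt for the next re-generation: base brief first, then each
// change in the order the user asked for it.
function buildPrompt(state: SceneState): string {
  if (state.modifications.length === 0) return state.basePrompt;
  const changes = state.modifications
    .map((m, i) => `${i + 1}. ${m}`)
    .join("\n");
  return `${state.basePrompt}\n\nApply these changes in order:\n${changes}`;
}
```

For example, starting from a state with no modifications and applying 'make the cage wider' and then 'add a window' yields a prompt that lists both changes in order, while the starting state is left untouched.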

The right-hand panel hosts the guided transformation conversation. Claude's default tone is too eager — quick to suggest alternatives, quick to fix. The system prompt is tuned to do the opposite: ask, listen, sit with, only offer when asked. The job is to help the user notice, not to coach. Before/After tabs let the user toggle between the original scene and the modified one as they work.

The pattern-naming step is where the product clicks. Claude reads a single sentence ('I always feel like I'm on trial in my relationships') and returns a poetic, accurate name with a description that mirrors the user's own framing. Naming a pattern is the first move in any therapy modality, and Claude does it well enough that the rest of the experience earns the user's trust before the visual even loads.

The image generation is the slow link. On the live deployment, scene rendering takes 30–60s and sometimes fails outright (the screen says 'Failed to generate scene' and prompts a retry). This is the trade for scenes that actually feel custom — the alternative is a small library of pre-built metaphors that never quite match the user's specific words. The current design accepts the latency as the price of personalization.
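The failure-and-retry behavior described above can be sketched as a small state machine. The state and event names, the attempt counter, and the `sceneUrl` field are assumptions for illustration; only the 'Failed to generate scene' message and the existence of a retry prompt come from the live app.

```typescript
// Hypothetical UI state for the scene generation step.
type GenerationState =
  | { status: "idle" }
  | { status: "generating"; attempt: number }
  | { status: "ready"; sceneUrl: string }
  | { status: "failed"; attempt: number; message: string };

type GenerationEvent =
  | { type: "start" }
  | { type: "success"; sceneUrl: string }
  | { type: "failure" }
  | { type: "retry" };

// Pure transition function: events that make no sense in the current state
// are ignored rather than throwing.
function reduce(state: GenerationState, event: GenerationEvent): GenerationState {
  switch (event.type) {
    case "start":
      return { status: "generating", attempt: 1 };
    case "success":
      return state.status === "generating"
        ? { status: "ready", sceneUrl: event.sceneUrl }
        : state;
    case "failure":
      return state.status === "generating"
        ? { status: "failed", attempt: state.attempt, message: "Failed to generate scene" }
        : state;
    case "retry":
      // Retry is only offered from the failed screen.
      return state.status === "failed"
        ? { status: "generating", attempt: state.attempt + 1 }
        : state;
  }
}
```

Tracking the attempt number makes it possible to change the copy or offer an alternative after repeated failures, which matters when each attempt can take 30–60s.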

© 2025 Hanna de Vries